Why innovation in computing has been stymied for decades

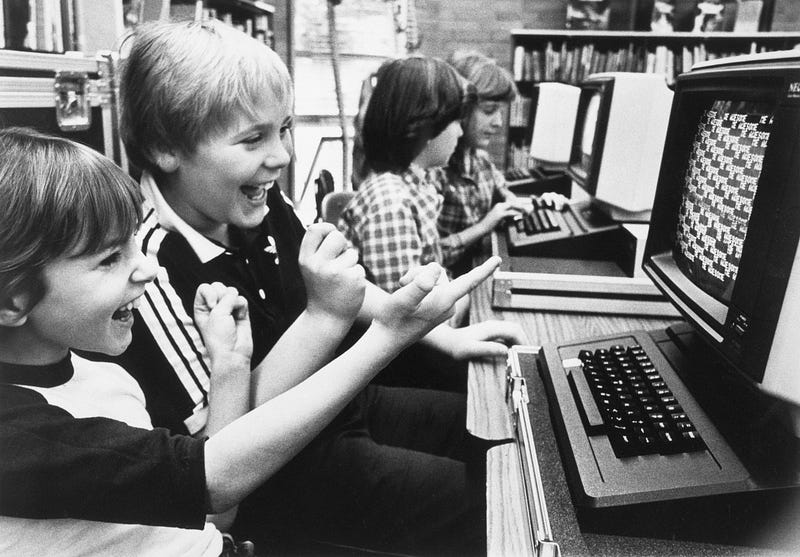

Credit: Bettmann/Getty

This is my current desktop wallpaper:

For those who don’t recognize it, that’s the Xerox Alto, the first personal computer from the 1970s and, arguably, the source of the “desktop” metaphor for computer interfaces.

I no longer believe that the Alto should never have happened as strongly as I did when I first created the wallpaper. If I did it again today, the computer pictured would be a first-generation Macintosh, not an Alto. After all, the mistakes that ruined graphical user interfaces (GUIs) forever didn’t happen at Xerox’s Palo Alto Research Center, aka PARC. They were dictated by Steve Jobs to a team that already knew better than to think any of it was okay: the rejection of composition, the paternalism toward users, and the emphasis on fragile, inflexible demo-tier applications that look impressive in marketing material but aren’t usable outside extremely narrow and artificial situations.

In one sense, the computer revolution is over because the period of exponential growth behind the tech ended 10 years ago. In another sense, it hasn’t…