Introduction

On April 6th 2022, notdan posted an interesting challenge on his twitter. He was skeptical about recent claims that tools could deobfuscate images of text which had been blurred for redaction purposes. If possible, this vulnerability could be exploited across the many examples of blurred credit card numbers, dollar amounts, PII, and other sensitive information across the internet. The claims didn’t live up to his expectations, and he was confident a general solution to deobfuscation didn’t exist.

To put his claim to the test he created the Pixel Challenge with a bounty of $100.

The very next day, notdan doubled down (actually ten-fold) on his beliefs by raising the stakes of the challenge to a very generous $1000.

So It Begins

The Twitter algorithm did its magic, and my good friend the Benevolent Orangutan (@BenevOrang) saw the tweet and shot me a message.

@BenevOrang kicking things off

@BenevOrang kicking things offThe next morning he had already started experimenting with evolutionary algorithms (his personal favorite technique) and was bouncing a few ideas off of me. I must admit, like notdan I was very skeptical this would be possible. I mean look at how blurry that image is!

When asked for final thoughts on his game plan, I was less than encouraging:

not good advice

not good adviceFortunately, he kept the conversation alive, and I took a minute to think about how I would actually tackle something like this.

much better advice (even with typos)

much better advice (even with typos)Image Super-Resolution

In recent years, the task of image super-resolution has been actively researched by the deep learning community. The goal of this super-resolution research is to use deep neural networks to increase the resolution of lower quality images.

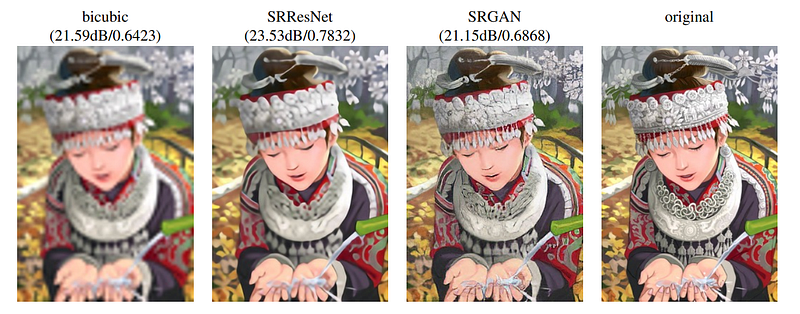

Left to Right: Low Resolution (4x), Outputs from two SR nets, High Resolution - from: https://arxiv.org/abs/1609.04802

Left to Right: Low Resolution (4x), Outputs from two SR nets, High Resolution - from: https://arxiv.org/abs/1609.04802Most applications of super-resolution focus on improving general image quality, as perfect high resolution recovery is impossible with the loss of information at lower resolutions. I am aware of very little research into using super-resolution for text recovery, other than a few papers dealing with text found in real scenes (street signs, building names, etc.). Regardless, I figured the techniques used for general image super-resolution, in principle, should apply here as well.

I Want to Have Fun Too

I had taken the day off to prepare for some overseas travel and spent the rest of the morning packing and getting updates about my friend’s attempts. By mid-morning I was convinced he was having more fun than me and so decided to start writing some code.

In another tweet, notdan had disclosed that the image was created with a Photoshop mosaic filter. I wasn’t familiar with the filter, so did some searching on how it’s implemented. My initial implementation created the pixelized effect by first down-sampling an input image and then up-sampling it back to the original resolution using nearest neighbor interpolation. I examined the challenge image to help estimate how much down-sampling would be required to produce a similar pixelization. The technique was a bit crude, but seemed like an okay starting point.

I also needed a way to produce training examples. I wrote some code using the python Pillow library for generating new images with white backgrounds and black text. I didn’t know what font was used in the challenge (yet), so I grabbed something generic off of my system. To produce a new image, the code selected a few ascii characters at random and centered them on the frame. I experimented with different font sizes while using my mosaic blur until I was routinely getting examples with words taking up three large pixels (just like the challenge image).

Armed with the ability to generate an infinite amount of high res and pixelated training examples, I was ready to find a suitable neural network for the job. I did a quick search and settled on a pytorch implementation of the Super-Resolution Resnet described in the paper Photo-Realistic Single Image Super-Resolution Using a Generative Adversarial Network by Ledig et al. (ironically from Twitter Research). I slightly modified the network’s architecture to work on black and white images and manipulated how much up-sampling would occur inside the network.

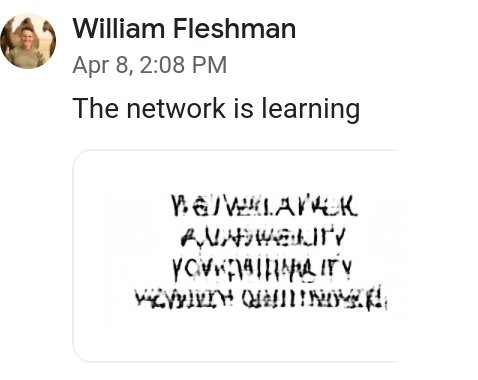

I wrote a wrapper class around my data generation function to comply with pytorch’s Dataset-Dataloader model and knocked out the pytorch boiler plate code for setting up a training loop. On each iteration, my network would attempt to recover 32 low resolution images. The output of the network would be compared to the ground truth, and the weights of the network were adjusted via gradient descent in order to improve the result. After each epoch of training (1024 random images in this case), I would run the challenge image through the network to look at the results. The output from the first epoch got me pretty excited!

Is that Ancient Sumerian???

Is that Ancient Sumerian???As training continued it became obvious there were some issues. I figured the process I was using to generate the training examples wasn’t good enough, but the day was over, and I was getting on a plane soon. I sent @BenevOrang all of my code and wished him good luck while I was away.

A GIF of that original training run. Pretty horrible, but you can actually see “NOBODY” in retrospect.

A GIF of that original training run. Pretty horrible, but you can actually see “NOBODY” in retrospect.Experiments

Motivated by the initial results, my friend continued to build off my code base while I was away. He sent me periodic updates of his experiments modifying the generation process, and by the end of his experimentation the phrase “VULNERABILITY FOR CREDIBILITY” was readable. Even “FAUX” is fairly recovered, but we didn’t even notice (who uses faux??).

Output from @BenevOrang’s trained model

Output from @BenevOrang’s trained modelI found the following experiments significantly notable and provide my justification for why:

1. Using a dictionary to produce words instead of random strings

During the training process the network will learn common patterns in the data. By using words from the English language the network will be able to better correlate common character pairs like “QU” and be less likely to produce uncommon pairs “YJ” it has never seen. Theoretically, this statistical knowledge could allow the network to correctly reconstruct under circumstances where multiple (but mostly jibberish) character sequences blur to the same pixelization.

2. Randomly selecting the font

Randomly rotating through fonts during training allows the network to become robust to font selection. While this makes the training more difficult, it allows for some uncertainty in the font used for the challenge. He had actually predicted the correct font early in the competition by looking up Adobe defaults which definitely boosted results. By the time I came back notdan had disclosed which font he used which simplified this aspect of the modeling.

3. Randomizing location of words in the images

If I had to guess, this was one of the most important things my initial generation process didn’t include. My friend had noticed that the same word would have different pixelizations depending on where the word was placed in the image. This caused me to take a deeper look into how my mosaic filter was implemented. Instead of my original method, it’s actually better to think about the filter taking a grid size as input. Then the filter takes each grid cell of pixels and replaces their value with the average for that cell. This is why placement of the text is important. For example, if a character is moved up a single pixel then the top pixels of the character might cross into a new grid cell. The new grid cell would get darker, and the original grid cell will get lighter because of the lost character pixels.

Reverse Engineering and Brute Force

I got back from my trip on a Sunday and work kept me busy throughout the week. My friend occasionally asked if I’d made progress and by the next Sunday had motivated me to pick up where I had left off. Armed with new insights, I reimplemented my mosaic blur using the grid method. Since he had recovered the word “vulnerability” I decided to use it as a crib to improve my own generation process. I set up an optimization loop that iterated over different font sizes and offsets while creating a mosaic blur of my generated text. Each iteration measured the distance between my version and the challenge image trimmed down to just that section. The optimization uncovered the best parameters and resulted in an almost perfect reconstruction:

Top: “VULNERABILITY” produced by my generation. Bottom: “VULNERABILITY” produced by notdan

Top: “VULNERABILITY” produced by my generation. Bottom: “VULNERABILITY” produced by notdanNow that I had a method for creating almost identical examples, I figured I could brute force my way to the solution. I reused the same code for finding optimal font size and offsets but kept the font size fixed to what I previously discovered. I then added logic to loop over every possible character and measure the distance between the added pixels and the corresponding pixels in the other challenge words. Instead of checking a single character at a time, I looped over pairs to account for the blending that occurs from the pixelization. I kept the first character of each optimal pair and then repeated the process for the next character in the word.

To my great excitement, this brute force technique spit out the answers for lines two through four — “VULNERABILITY FOR CREDIBILITY NOBODY QUESTIONED”. The results for the top line looked like gibberish, a sequence of random characters ending in “AFAUX” (seriously, who uses the word faux!?).

Final Touches

Motivated by being so close to the complete answer, I fired up my GPU once more and watched my electric bill continue to rise. I had replaced the data generation process with my improved mosaic blur, used a dictionary to generate the words with the correct font and size, and chose the locations in the images randomly. The rest of my initial code base remained the same and the results were markedly better. I was finally able to read off the top line “THIS WAS A FAUX”.

Time lapse of final training run.

Time lapse of final training run.Claiming the Prize

I sent notdan the results that night via DM and posted the winning tweet the next morning:

Later that day notdan confirmed the win and in extremely generous fashion sent over the prize money + a bonus:

https://twitter.com/notdan/status/1518934377165590528

https://twitter.com/notdan/status/1518934377165590528Closing Thoughts

Twitter reactions to the results were a mix of amazement and fear. In general, I think the results do support the claim that pixelization is not a secure means of redaction. Just because the output is unrecognizable, what’s left can be thought of as an alphabet in a different language understandable (or learnable) by algorithms. Some information is lost through the process, but recoveries are still possible when paired with statistical information about the natural language.

On the other hand, I think notdan was correct in his skepticism. As shown here, there is still a lot of fine tuning to the application that’s required for success. General solutions to arbitrary text super-resolution are unlikely due to numerous possible variations in background/text color, size, font, etc. The vulnerability should still be taken seriously, since it is often easy to derive these features for a specific targeted attack.

Acknowledgements

A huge thank you to notdan for putting on this super interesting challenge. This is an incredible way to produce and share knowledge with the wider InfoSec community. Everyone buy him a coffee or send some XMR his way. Checkout his medium profile, twitter, and show HACKER.REHAB.

Finally, thanks to the Benevolent Orangutan for discovering the competition, sharing it with me, motivating me to keep working on it, and providing the numerous insights and great back and forth throughout.